It's about the strategy, stupid

"What can you see in the data?"

The client asked me this while I was scanning through their analytics setup. Ten curious eyes were looking at me, waiting.

I was actually looking for something completely different. I wasn't hunting for insights. I was just checking the shape of the data - what kind of events they're collecting, how things are structured. It's the usual "let me get an overview" check I do at the start of every project.

But the group sitting around me was expecting something else entirely. They wanted answers. The data should tell us something about the business, right?

I closed my laptop.

This question has followed me since day one of working with analytics setups. And I can tell you - for the first two or three years, I always tried to give them something. I'd point at the screen and say things like "the bounce rate here looks worth investigating" or "I'm not sure about this landing page performance." I wanted to deliver. I didn't want to admit what was actually true: I had no idea.

When you take a first look at a dataset, you have no idea what matters. Trying to pull insights out of that first look is actually the wrong thing to do.

It took me six or seven years to understand why I couldn't tell anything meaningful from that initial scan. Nowadays, I do it completely differently. I don't look at the data anymore. Not at the start.

When clients ask me in initial workshops if I want to see their analytics account or look at their dataset, they get confused when I say no. "Why don't you want to see the data?"

Because right now, I'm not interested in your data. I'm interested in understanding how your business works.

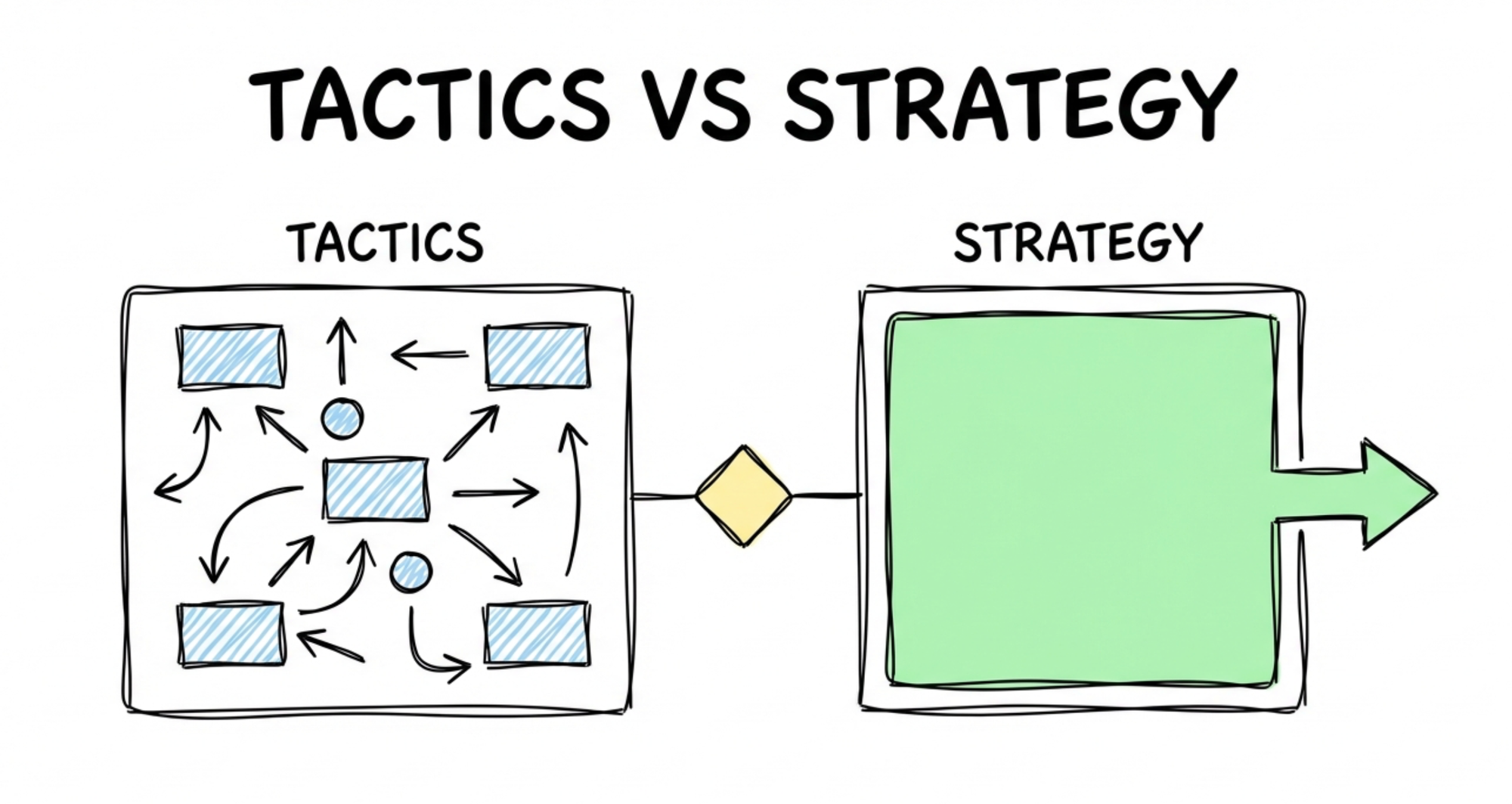

The tactics trap

Analytics content is almost always tactical. Scroll through LinkedIn and you'll see it everywhere: "We just moved the client to server-side tag management and tracking improved significantly." "We're using this specific retention analysis and it gave us this insight." "Here's how you should measure your data."

These aren't wrong. But they should come at the end of something else. Hopefully they do - and aren't just boxes ticked off a catalog of what you can do.

Unfortunately, most of the work in this space is exactly that. We have backlogs filled with tactics that we deploy because they're on the list, not because they're the right thing for this specific situation.

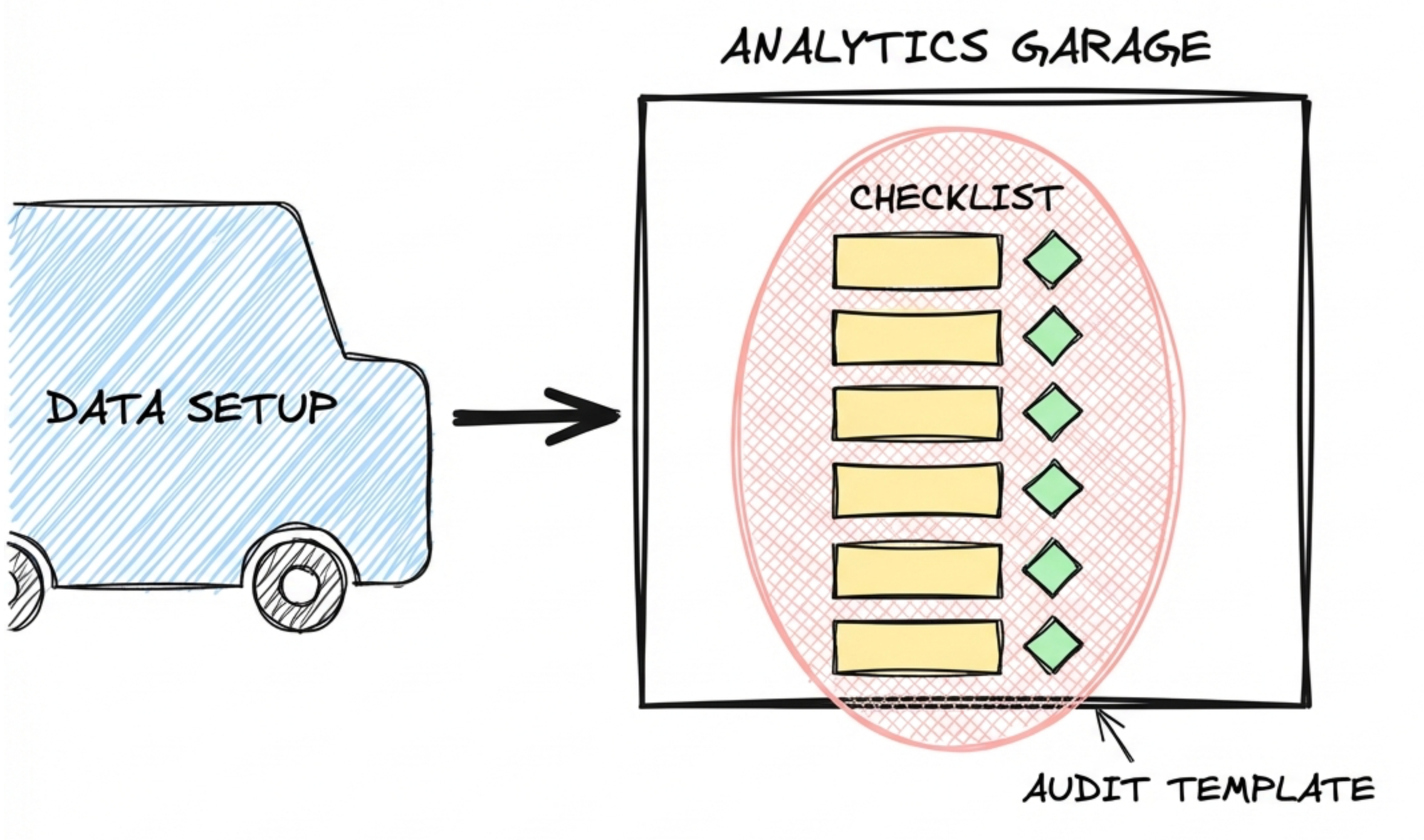

The checklist approach

I can still remember my first four to five years of analytics projects. We always had templates for analytics audits. Over time, these templates became quite sophisticated. You gain experience, you find weird stuff, you add it to the list. Eventually, you have a comprehensive document that covers everything.

The process works like a car check. Someone drives their data setup into your garage. You take your checklist, go through all the different things, tick your boxes, write short summaries. "This looks okay." "This is maybe not so good." "This needs attention."

This is still valuable work. There are plenty of cases where these audits uncovered severe problems. Things that genuinely needed fixing.

But here's the issue: it's often presented as the one thing you have to do. The checklist becomes the strategy. You go through the list, you find things that don't match best practices, you recommend fixing them. And none of it is wrong - no one is faking these results. The audit will discover real problems in a setup. The recommendations will be technically valid.

Take my favorite candidate: server-side tagging. People who know my content already know this example. Recommending server-side tagging isn't wrong. It won't produce bad data. But the question remains: are we actually doing the right thing? Or are we just playing the standard program? Or, worse, are we just selling hours?

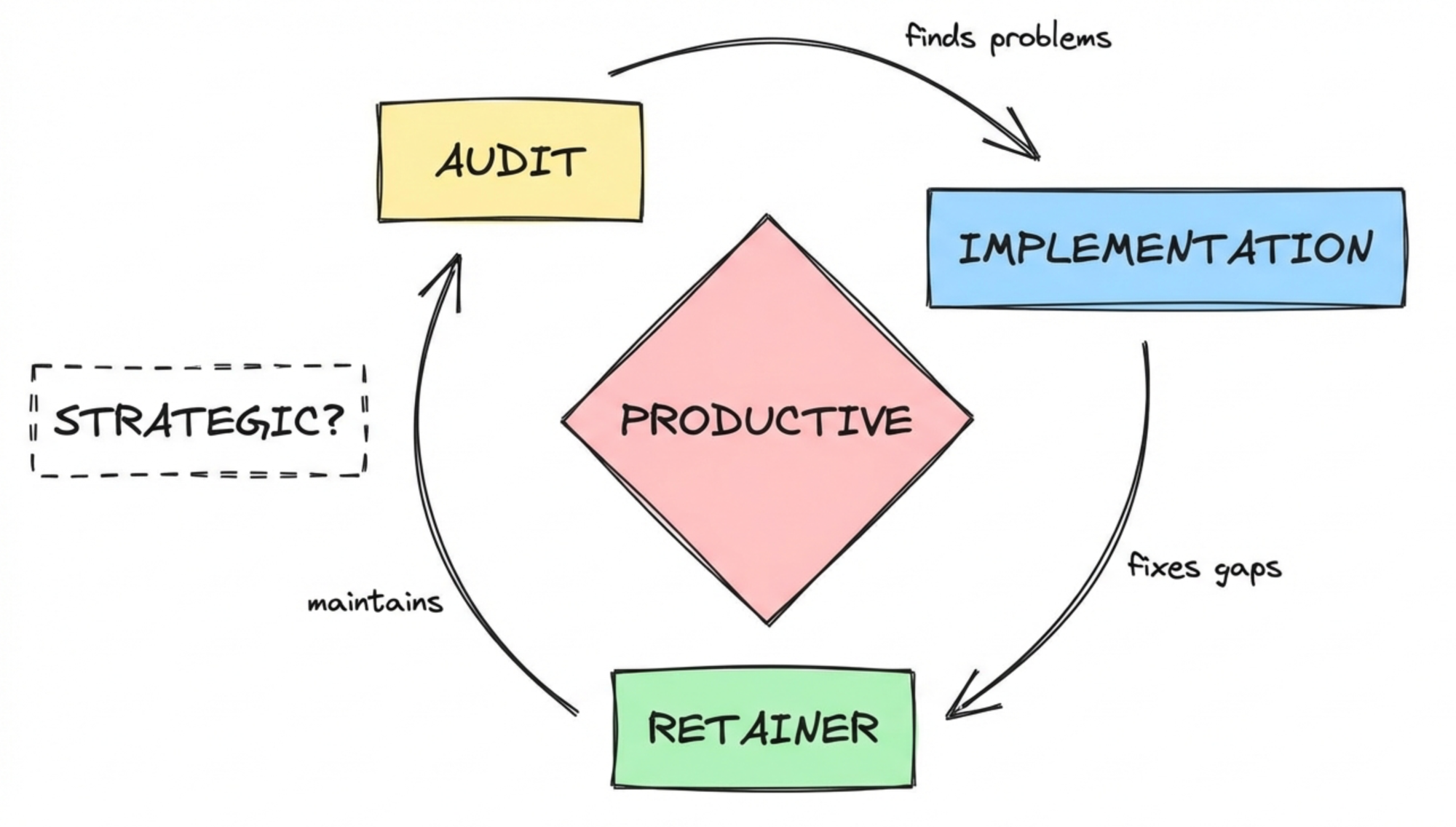

The consultant triangle

There's a classic pattern in analytics consulting. You do an audit to show people what they're missing. Then you sell an implementation project to fix the things you found. Then you hope they stick around on a retainer.

It can become a small, self-sustaining world. Nothing on these lists is wrong. The audits discover real problems. The implementations address real gaps. The retainer keeps things maintained. Everyone feels productive.

But productive isn't the same as strategic.

Let me give you a counter-example. Together with Barbara, I run FixMyTracking, where we also do audits. We also go through measurement setups and identify parts that aren't implemented well. The output looks similar on the surface.

The difference is how we frame these projects.

First, we only analyze ad platform measurement - not general analytics tracking. Why? Because when you improve measurement for advertising platforms, you see the immediate impact. Technical fixes directly translate into performance improvements, and you can verify them quickly by checking your costs or performance metrics.

Second, one of our most important criteria is ad spend. We check how much the client is actually investing in the channels we'd be working on. All this tinkering and checkbox-ticking doesn't make sense if advertising plays only a 10% role in their business. We want to make sure the channels we're optimizing are actually a driving force in acquisition.

Same type of work. Same tactics. But filtered through a strategic question: will this actually matter for this specific business?

And that's the bridge. That's what all these tactics are missing.

The strategy.

So what is the strategy here?

If tactics are the problem, strategy is the answer. But what does that actually mean in practice? It's not about writing strategy documents or having off-site meetings. It starts much simpler: with the questions you ask before you touch any data.

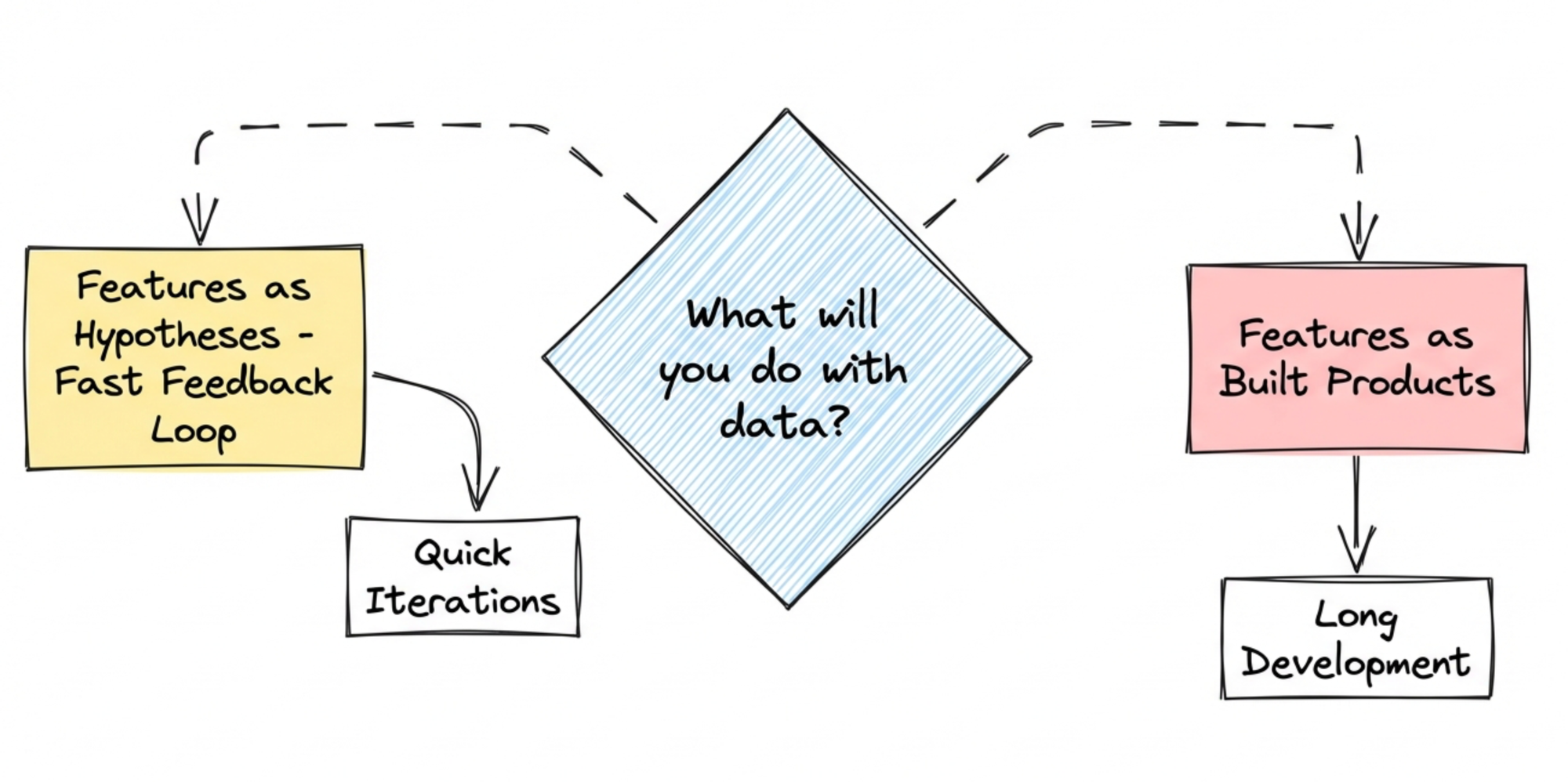

What will you do with this?

The simplest implementation of strategy is to ask what you're actually planning to do with the data.

When I work with product teams, one of the things I want to understand is what role data will actually play in their daily work. I want to understand how they make backlog decisions. I want to understand how they treat features.

Do they treat features as hypotheses - where they need fast feedback to decide how much more time to invest? Or do they treat features as building blocks that will be built and maintained anyway?

This makes a big difference.

If you build features for features' sake, then data about how those features are used, how they got adopted, what role they play in retention or revenue - that data has a different weight. The feature will be built or maintained regardless. I'm not saying data is useless here. But the impact will be smaller and slower.

Compare that to treating features as clear hypotheses. Here, you want feedback as fast as possible. This feedback is essential to decide: do we invest more, or do we stop? The data setup matters in a completely different way.

Or maybe you already know this feature is strategically important. You're committed. But you want to use data to make it work - to optimize, to find problems early, to prove impact.

Same data collection. Three very different levels of impact depending on how the team actually operates.

The two levels of the question

There's a trick that gets posted on LinkedIn once or twice a month. When the business asks your data team for a specific report or metric - something urgent, something they need immediately - you ask a simple question back:

"Okay, we'll calculate this metric. And let's say it drops by 20% from one week to the next. Or it increases by 30%. What actions will you start based on this insight?"

This already surfaces a lot. Sometimes people realize they don't actually know what they'd do. The urgency fades.

But this is only the first level of the strategy question. You're testing if there's any action attached to the insight.

The second level goes deeper: "Can you show me where this insight sits in your current strategy?"

This is a different question. Before we implement anything, I want to understand the bigger picture. Not just "what would you do if the number moves" but "how does this connect to what you're actually trying to achieve?"

Without this, you're just producing metrics. With it, you're producing something that matters.

The uncomfortable truth

These conversations don't always end well.

I've done product analytics workshops where we determined - together, during the workshop - that yes, we'd do the setup, and it could still give some insights into how the product was performing. But given how this team operated, data wouldn't play a significant role in their decisions.

This doesn't make teams happy. They came to the workshop believing data would make their life easier. And now someone is telling them that the setup we're building probably won't have the impact they're dreaming of.

But the picture is incomplete if we don't talk about this. And these projects are never about changing how a team works - that's a completely different kind of effort, and a much longer process.

Here, it's more about setting the scene. We can build a very effective data setup. But its impact depends on the role it will play in the operations of this team. That's not a data problem. That's a strategy problem. And it is essential to be transparent about this.

The window of opportunity

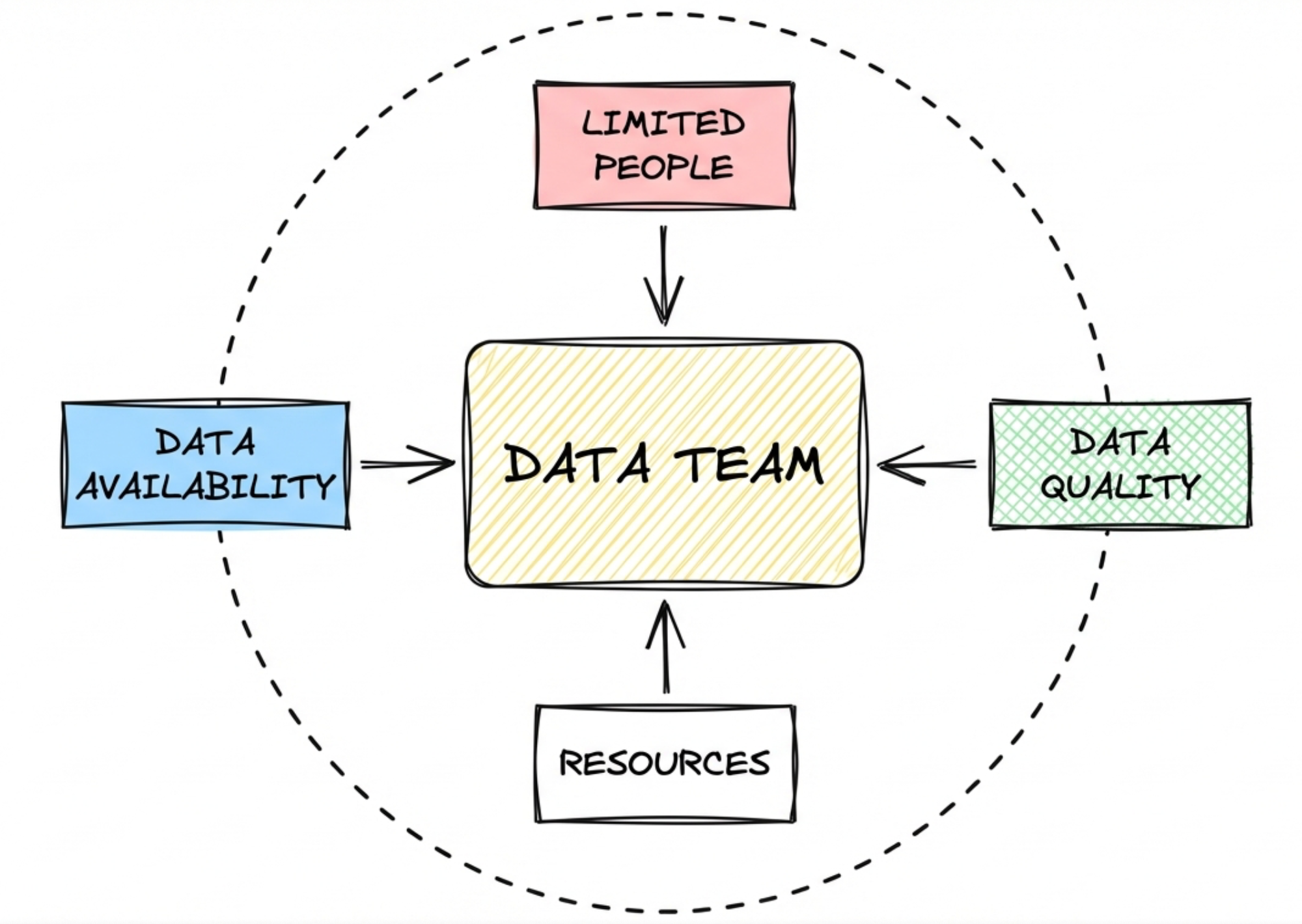

So strategy matters. But why does it matter so much for data and analytics teams specifically? Because of constraints. Every team has them, but data teams feel them acutely - and the typical response only makes things worse.

The constraint reality

Data and analytics teams, like every other team, have natural constraints. You have a specific number of people who can work on things. You have a specific amount of data that's currently available. You have a specific level of data quality. And there are probably a handful more constraints that define your situation.

The team is usually well aware of these constraints. They're trying to do their best job possible. But these constraints allow only a specific type and amount of work to be delivered.

Every data team knows its backlog. The ever-expanding, not-so-fun-to-look-at backlog with 40 or 50 items in it, some of them already two years old.

And everyone who has worked with data teams knows the other side. You have a specific need for an insight. You follow the rules. You write a ticket. And you never hear back. Maybe once or twice you ask about it. "Hey, what's happening with this issue?" The answer: "Yes, it's still in our backlog. We have some other priority items. Maybe it can make it to the sprint in a month or so."

The obvious solution is to hire more people. Unfortunately, in every setup I've seen, this hasn't solved the problem. It just made it bigger.

What you actually have is a small window of opportunity. After maintenance work and the fundamental things that have to be done, there's a limited number of hours left each month for real analyst work - producing insights for the business. This window is finite.

So the question becomes: what goes into that window?

In the standard approach, people drop everything into the backlog, and you pick the things from whoever shouts loudest in Slack channels or whoever is high enough in the hierarchy that ignoring them would be painful.

This is usually not the best use of your time.

The city trip

Think about going on a trip to a really nice city. One approach: you check into your hotel, and every morning you just go outside and walk around. When you see something that might be interesting, you do it.

I'm not saying this isn't a nice type of holiday. Sometimes it can be fun.

But let's say you're really interested in exploring the cool things this city has to offer. You have preferences. You have limited time. The random wandering approach won't give you an ideal output.

So what do you do instead?

You sit down. You look at what's actually possible in this city - what attractions exist. You map this to what you actually like to do. You check reviews to see if things are as good as they sound. You might ask someone you trust who's been there before, someone who thinks similarly to you. Or you ask an expert - someone who can listen to what you like and give you tailored recommendations.

Based on all this, you build an itinerary. One that fits your time, covers the things that matter to you, and makes the most of the trip.

Why don't we do the same thing for how we handle data work?

We have a small window of opportunity. We have constraints. And yet the standard approach is to wake up, walk outside, and react to whatever happens to cross our path - or whoever happens to shout the loudest.

There's a better way. And it starts with understanding where the organization is actually trying to go.

Join the party

Planning a trip is one thing. But where do you actually look to figure out what matters? For data teams, the answer is closer than you think. It's already there - in how your organization operates.

Strategy trickles down

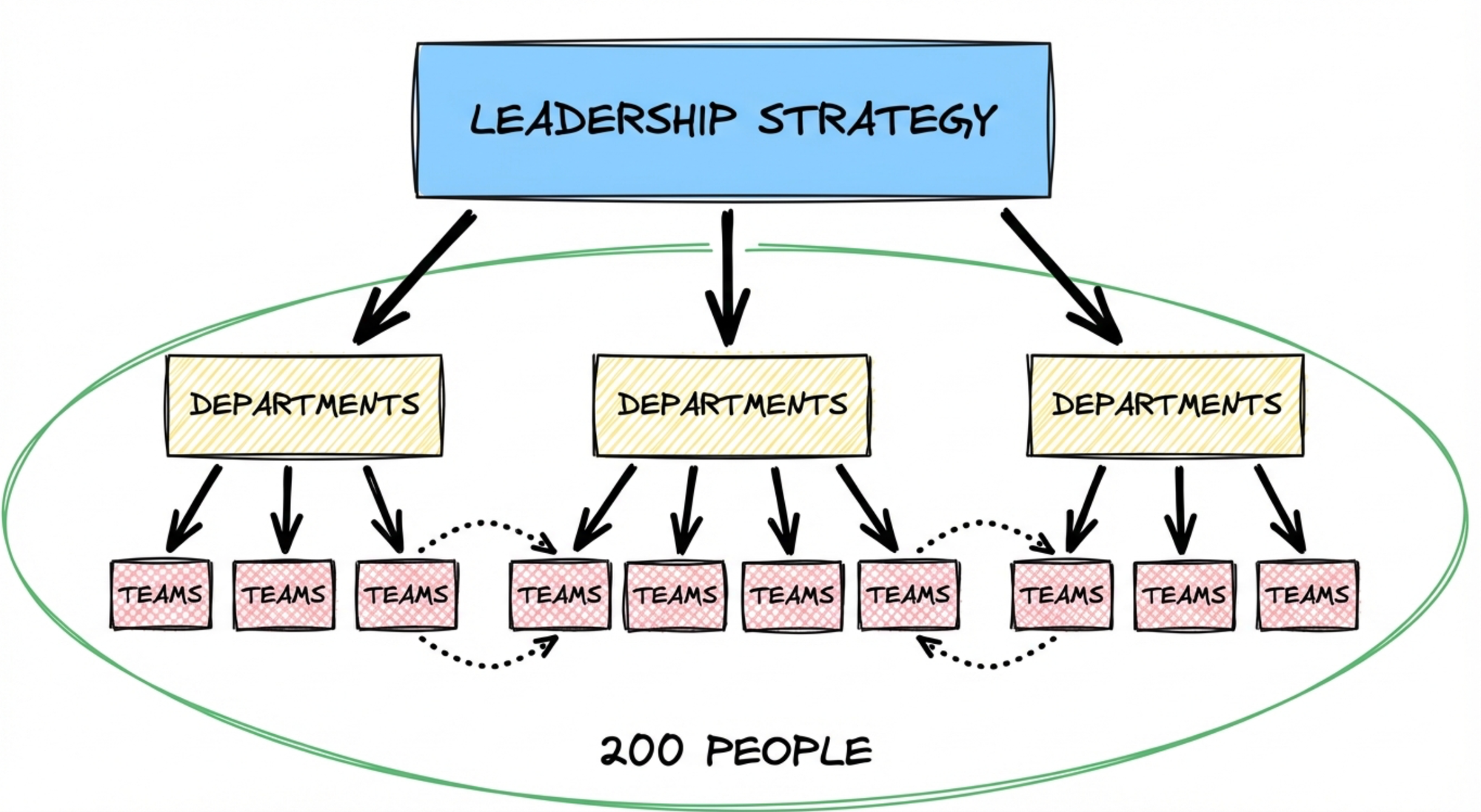

Organizations have constraints too. An organization can usually only push in one specific direction at a time. If you have 200 people all working in different directions, you're usually pretty ineffective.

This is why organizations have leadership. And the way leadership presents the direction of the company is usually called strategy.

Strategy can take different forms. Sometimes it's annoyingly vague - so vague you wonder if you can even call it strategy. Other times, it's powerful because it gives a clear direction for where things need to move.

In an ideal setup, strategy trickles down. You have a specific strategic push that the organization is focusing on for the next 6 to 12 months. Marketing builds their own strategy around this push. Product does the same.

Here's the trick: when you work in the data and analytics team, you want to be part of this party.

Remember the constraints we've been talking about: limited time, limited resources, and the need for the right data to do anything. If you want to use what you have effectively, one thing works really well: align with the strategy the whole organization is currently focused on.

Let me give you an example.

Say your organization is thinking seriously about how to add AI to what you're doing. Not just slapping an AI label on things, but genuinely asking: which parts of our business could be improved by current AI capabilities? How can we provide more value to our customers with this?

The organization identifies an opportunity and it becomes an organizational push. Product and development are tasked with rolling it out. Marketing is tasked with building awareness - small at first for early versions, bigger as confidence grows.

The data team now has an opportunity to join this. How can we support this strategic push?

You could develop adoption metrics to see early signals of whether this direction is working. You could create proxies to detect changes in retention patterns as people start using the new features. You could build dashboards that show early revenue signals, making transparent what's actually happening.

Maybe you even surface that revenue will take a dip because the new features improve customer experience, but the pricing model hasn't caught up yet. That's valuable. It makes things visible so the company can adapt - maybe introduce different pricing later. Data helps make this transparent.

When you focus on the strategic direction the company is taking, you're working on something where effort and resources are already flowing. You can make a difference. You can make an impact.

The simple contrast

Imagine the company has been working on this new initiative for months. Everyone contributed. And then someone asks the data team: what did you do during this time?

"We implemented server-side tagging. We can now track 3% more people on our website."

Everyone nods politely. Sounds good, technically. But you won't get applause for that.

Compare that to: "We built adoption metrics for the new AI features. We created early signal dashboards for retention impact. We surfaced that revenue will dip initially and helped frame the pricing conversation. We made visible what was working and what needed attention."

Same data team. Same constraints. Same window of opportunity.

One played the standard program. The other aligned with what the organization actually cared about.

Let me return to that moment. Ten curious eyes. The closed laptop.

The reason I changed my approach so significantly is this: when I start these projects now, I don't look at any data. I don't analyze what's there. I do look at what data is available - but much later. Only after we've developed a plan for where we want to go, what we want to measure, and how we can support the business best.

Then I look at the data to see what's already available. What can we pick from there? Where's the gap between what we want to achieve and what's currently measured?

That's when the data becomes useful.

Where I spend most of my time now is at the beginning - understanding how the business works. When I talk with marketing, I want to know: where do you build awareness? How do you support newly attracted accounts in their discovery phase? How do you move them into onboarding? I want to understand the essential touchpoints. The levers.

And then: what's your strategy? What were you pushing for last year? How well did it work? Do you know why it worked or didn't? What's your strategic push for the next couple of months?

This gives me the picture I need. The one or two areas where improving the data setup can actually move things.

It's never an exact science. Sometimes we just know that we need to make things visible first before we can take the next step. That's fine. Because we know the direction.

Interestingly, in these setups, no one asks me anymore what I can see in the data.

Because we've already shifted the question.

Not "what can you see?" but "what do we actually want to see?"